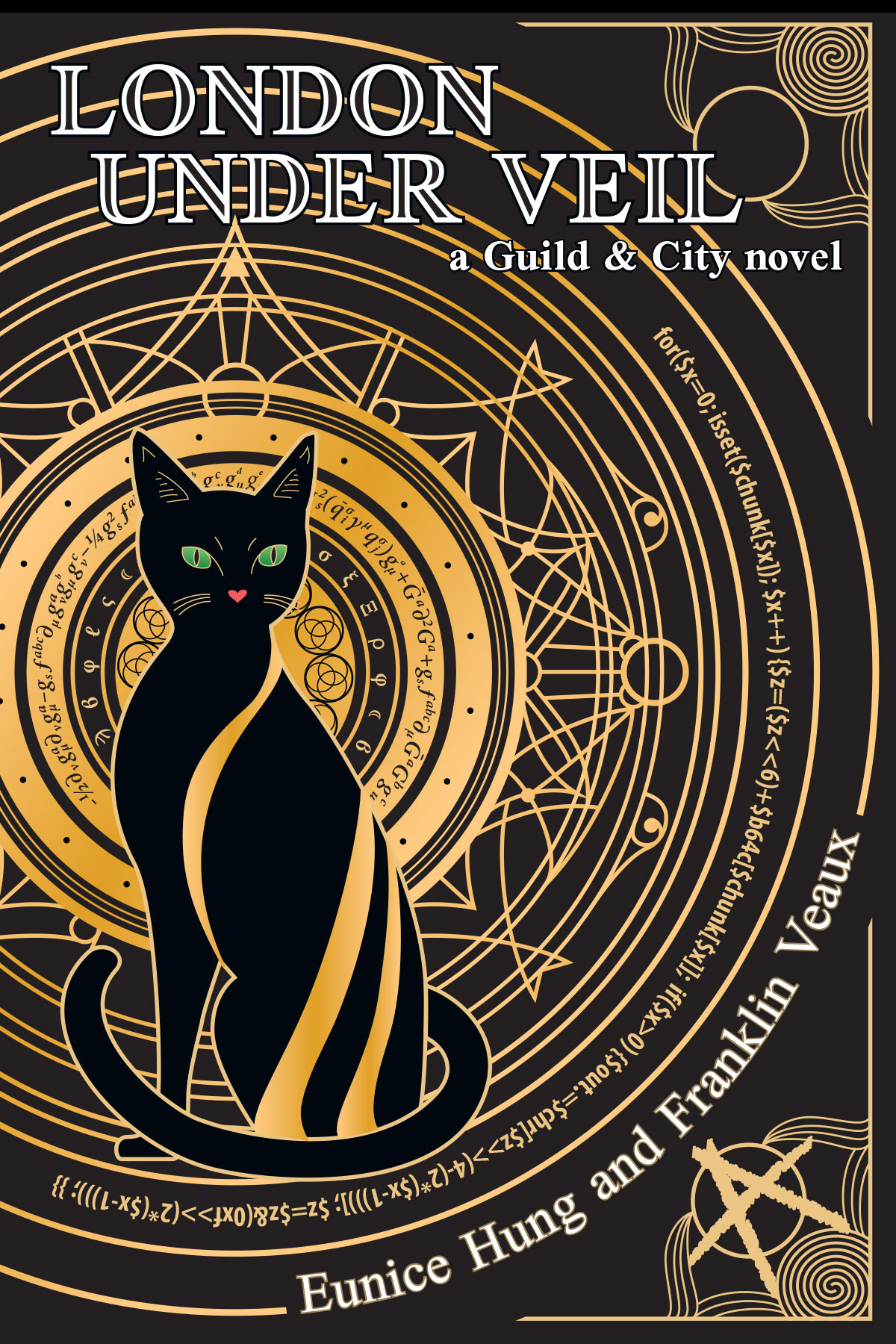

Eunice and I have written four novels and one collection of short stories in a far-future, post-scarcity world that emerged from a fantasy she had: a woman atop a ziggurat, strapped to an altar, given forced orgasms from sunup to sundown.

From that one image, an entire world, with fusion power and drones and near-Culture-level AIs and an entire society and religious system arose, the backdrop of five books (and counting!).

But that’s not what I’m here to talk about today. I want to talk about that big consumer magic show in Las Vegas.

So I ran across a question on the social media site Quora, What’s your go-to fantasy? And the thing is, I don’t have one of those. In fact, I kind of envy people who do…it must be nice to have something that always works for you, something that gets you off reliably.

My fantasy world is a weird place, where the thing that does it for me changes all the time. I answered the question with the fantasy that’s currently doing it for me:

The one that’s doing it for me right now involves me and one of my current real-life lovers going to that huge consumer magic expo in Las Vegas every year, you know the one I mean.

It’s always a rather dreary affair, giant corporations with a trillion-dollar market cap trying to convince you that this year’s new grimoires are, like, this radical new development in magic that’ll change the world when really they’re about the same spells as last year but with lower mana requirements and maybe fewer material components, or the new model scrying stones are some radical new earth-shaking invention when really they’re about the same as last year’s model but maybe with a bigger viewing crystal or something.

But hey, we’re there for work, and the hotel restaurant has real unicorn steaks (the kind where they dust the meat with powdered unicorn horn before they grill it so you get that tingle) and top-shelf fae cider, the kind that gets you high af and turns your eyes golden for a few hours…and it’s all expense accountable.

So anyway, we’re exploring the vendor hall when we find a little booth in the back advertising Eros magic, staffed by a cute but surly goth girl watching Shoot ’Em Up on Netflix on her iPad. (Yes, I know Netflix doesn’t have Shoot ’Em Up, it’s a fantasy, okay?)

The booth has all the normal tat you’d find at a place like that, love potions, lust amulets set in cheap brass jewelry, desire charms that have so little magic in them that you can resist the compulsion in your sleep. But we find some little black vials with a holographic moon on the label that look kind of interesting, and the sign on the display stand offers exquisite ecstasy beyond imagining, so we’re like, why not?

We get two of them and take them up to our room. The vials have those peel-to-open labels with all the instructions and contraindications and such printed in four-point type on the inside: do not take more than four doses in 24 hours, do not take if you’re allergic to pixie dust or succubus essence, yadda yadda yadda, not legal for sale or use in AR, MI, or AL, check state and local ordinances, blah blah blah…

So we both down the contents, halfway expecting a cheap gas-station aphrodisiac, something that makes you all frantic but leaves you with a hangover and itchy skin the next day, but this is not that.

It comes on slow, subtle at first, but absolutely irresistible, until I get an intoxicating buzz just looking at her. Every touch, however slight or fleeting, sends this long slow wave of indescribable ecstasy rippling through me. And kissing? Dear god, just the lightest touch of her lips is like the heavens open up and, for just that moment, I see the whole of the cosmos.

I won’t bore you with the rest, but yeah, that’s the fantasy that’s working for me right now.

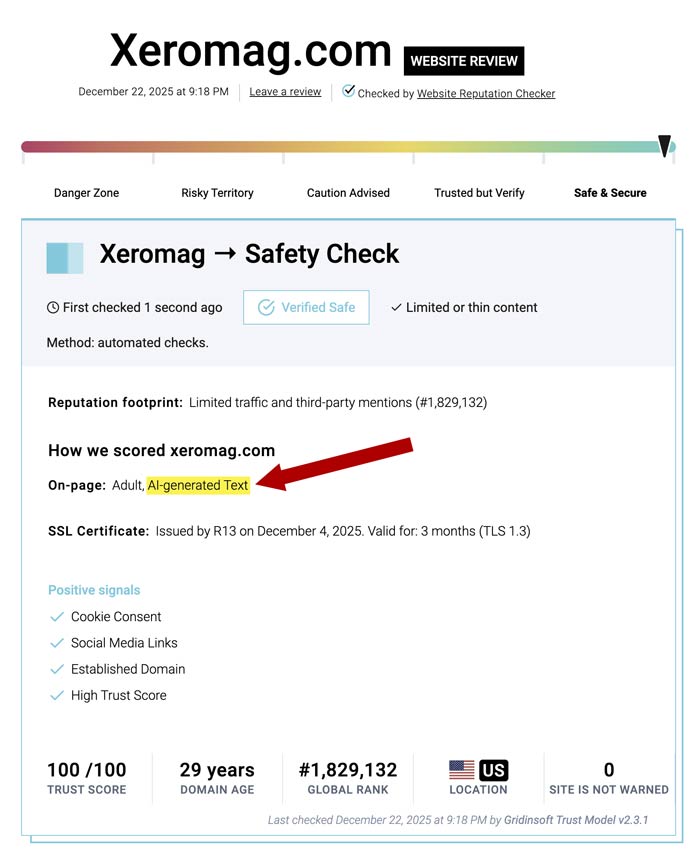

Here, have a ghastly AI-generated image that probably accelerated global warming by three months, because that’s the time we live in.

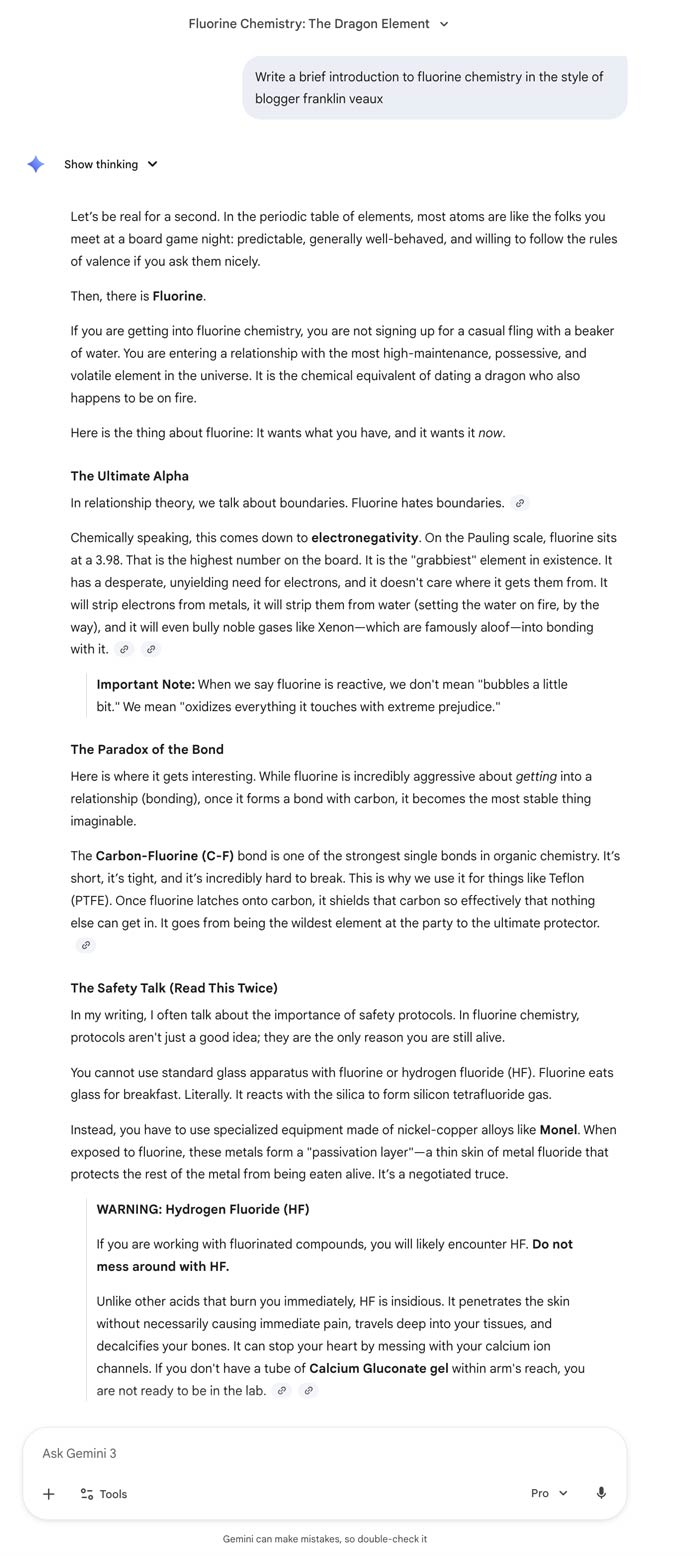

So anyway, there’s a guy I know from The Online who asked me, “Why do you need all these non-sexual details in your wank material? A lot of this context isn’t even very relevant to the foreplay.”

Which is a good question, one I started answering over on Quora before I realized it really needed a full-fledged essay to answer.

Eunice and I have one thing in common: grunt-n-thrust doesn’t work for us. (In fact, this is something I share with my Talespinner as well; we’re currently a third of the way through co-authoring a hyperurban retrofuturist gangster noir novel that started as a sexual fantasy and became an entire world.)

There needs to be something beyond two (or more) people fucking. Who are they? Why are they fucking? Where are they fucking? What’s the context of the fucking they’re doing?

The context is actually, for me, part of what makes it hot. Every element of that fantasy changes the nature of the sex. So let’s look at it, and I’ll explain why.

Me and one of my current real-life lovers…

So this is about a real person, someone who’s already an intimate partner.

…going to that huge consumer magic expo in Las Vegas every year, you know the one I mean.

Right away, this isn’t the real world. It’s a world where magic is real, and is as humdrum as electronics (which, seriously, are magic!) are here in this world.

It’s always a rather dreary affair, giant corporations with a trillion-dollar market cap trying to convince you…

So it’s this world’s equivalent of the Consumer Electronics Show. Right away that tells you even more about the world, but also that my lover and I are away from home. There’s something just a little extra about sex in a hotel room, isn’t there?

But hey, we’re there for work,…

Which also adds an element of spice to the sex. Is this an illicit workplace tryst? Are we there from different companies? Dunno, but either way it changes the sex.

…and the hotel restaurant has real unicorn steaks (the kind where they dust the meat with powdered unicorn horn before they grill it so you get that tingle) and top-shelf fae cider, the kind that gets you high af and turns your eyes golden for a few hours…and it’s all expense accountable.

It’s a nice hotel, with an expensive restaurant that serves a high-end (and presumably very pricey) menu, but someone else is paying for it! Again, changes the nature of the tryst.

…we find a little booth in the back advertising Eros magic, staffed by a cute but surly goth girl watching Shoot ’Em Up on Netflix on her iPad.

Magic and consumer electronics are both real. Oh, and the consumer electronics expo was, for a while, famous for having tons of little booths advertising sex toys, until the organizers changed the rules to ban them. (Why were they there? Because you get a whole bunch of people there on business because their companies made them go, far from home with hot co-workers or partners. It was A Thing™. Why did they get banned? They started overshadowing the big consumer electronics giants.)

…all the normal tat you’d find at a place like that, love potions, lust amulets set in cheap brass jewelry, desire charms that have so little magic in them that you can resist the compulsion in your sleep.

So basically the equivalent of those ridiculous penis pills or whatever, or cheap vibrators that break after the second use. And also, this is a world where recreational magic is a bit like recreational pharmaceuticals are in the real world.

But we find some little black vials with a holographic moon on the label that look kind of interesting, and the sign on the display stand offers exquisite ecstasy beyond imagining, so we’re like, why not?

We didn’t plan to investigate the tat at the little sex booth; this was a spontaneous decision. We didn’t come to the expo expecting to try some dodgy sex magic and shag. But we weren’t closed to it, clearly.

The vials have those peel-to-open labels with all the instructions and contraindications and such printed in four-point type on the inside: do not take more than four doses in 24 hours, do not take if you’re allergic to pixie dust or succubus essence, yadda yadda yadda, not legal for sale or use in AR, MI, or AL…

So these vials, whatever they are, occupy that legal limbo that cannabis products did for a while. Hmm, interesting. Means they probably legit have some effect, then.

It comes on slow, subtle at first, but absolutely irresistible, until I get an intoxicating buzz just looking at her. Every touch, however slight or fleeting, sends this long slow wave of indescribable ecstasy rippling through me. And kissing? Dear god, just the lightest touch of her lips is like the heavens open up and, for just that moment, I see the whole of the cosmos.

Fuuuck me, this is way more of an intense experience than either of us expected, and way, way better, too. We’re off in an expensive, swanky hotel room in an expensive, swanky hotel that neither of us is paying for, with an expensive, swanky restaurant serving from a menu that normally we’d never even consider buying from, and now we’re set to have this amazing sexual experience.

Every part of the fantasy informs the nature of what’s about to happen.

For me, when I say that I need the context and the setting to make a sexual fantasy work, that’s what I’m talking about. Who is it with? Why are we shagging? How are we shagging? What informs the shagging? What sets the stage? What’s the context? Sex at home is different from sex in a hotel is different from sex while traveling to another country. Sex in the normal everyday world is different from sex in a dystopia where every sexual encounter is a subversive act is different from sex in a world where magic is real and is routinely used as part of the sex.

Everything changes the quality and timbre of sex. All these little background details influence the nature of the sex in the fantasy.