The United States is unusual among First World nations in the sense that we only have two political parties.

Well, technically, I suppose we have more, but only two that matter: Democrats and Republicans. They are popularly portrayed in American mass media as “liberals” and “conservatives,” though that’s not really true; in world terms, they’re actually “moderate conservatives” and “reactionaries.” A serious liberal political party doesn’t exist; when you compare the Democratic and Republican parties, you see a lot of across-the-board agreement on things like drug prohibition (both parties largely agree that recreational drug use should be outlawed), the use of American military might abroad, and so on.

A lot of folks mistakenly believe that this means there are no real differences between the two parties. This is nonsense, of course; there are significant differences, primarily in areas like religion (where the Democrats would, on a European scale, be called “conservatives” and the Republicans would be called “radicals”); social issues like sex and relationships (where the Democrats tend to be moderates and the Republicans tend to be far right); and economic policy (where Democrats tend to be center-right and Republicans tend to be so far right they can’t tie their left shoe).

Wherever you find people talking about politics, you find people calling the members of the opposing side “idiots.” Each side believes the other to be made up of morons and fools…and, to be fair, each side is right. We’re all idiots, and there are powerful psychological factors that make us idiots.

The fact that we think of Democrats as “liberal” and Republicans as “conservative” illustrates one area where Republicans are quite different from Democrats: their ability to frame issues.

The American political landscape for the last three years has been dominated by a great deal of shouting and screaming over health care reform.

And the sentence you just read shows how important framing is. Because, you see, we haven’t actually been discussing health care reform at all.

Despite all the screaming, and all the blogging, and all the hysterical foaming on talk radio, and all the arguments online, almost nobody has actually read the legislation signed into law, after much wailing and gnashing of teeth, by President Obama.

And if you do read it, there’s one thing about it that may jump out at you: It isn’t about health care at all. It barely even talks about health care per se. It’s actually about health insurance. It provides a new framework for health insurance legislation, it restricts health insurance companies’ ability to deny coverage on the basis of pre-existing conditions, it seeks to make insurance more portable…in short, it is health insurance reform, not health care reform. The fact that everyone is talking about health care reform is a tribute to the power of framing.

In any discussion, the person who controls how the issue at question is shaped controls the debate. Control the framing and you can control how people think about it.

Talking about health care reform rather than health insurance reform leads to an image in people’s minds of the government going into a hospital operatory or a doctor’s exam room and telling the doctor what to do. Talking about health insurance reform gives rise to mental images of government beancounters arguing with health insurance beancounters about the proper way to notate an exemption to the requirements for filing a release of benefits form–a much less emotionally compelling image.

Simply by re-casting “health insurance reform” as “health care reform,” the Republicans created the emotional landscape on which the war would be fought. Middle-class working Americans would not swarm to the defense of the insurance industry and its über-rich executives. Recast it as government involvement between a doctor and a patient, however, and the tone changed.

Framing matters. Because people, by and large, vote their identity rather than their interests, if you can frame an issue in a way that appeals to a person’s sense of self, you can often get him to agree with you even if by agreeing with you he does harm to himself.

I know a woman who is an atheist, non-monogamous, bisexual single mom who supports gay marriage. In short, she hits just about every ticky-box in the list of things that “family values” Republicans hate. The current crop of Republican political candidates, all of them, have at one point or another voiced their opposition to each one of these things.

Yet she only votes Republican. Why? Because she says she believes, as the Republicans believe, that poor people should just get jobs instead of lazing about watching TV and sucking off hardworking taxpayers’ labor.

That’s the way we frame poverty in this country: poor people are poor because they are just too lazy to get a fucking job already.

That framing is extraordinarily powerful. It doesn’t matter that it has nothing to do with reality. According to the US Census Bureau, as of December 2011, 46,200,000 Americans (or 15.1% of the total population) live in poverty. According to the US Department of Labor, 11.7% of the total US population have jobs but are still poor. In other words, the vast majority of poor people have jobs–especially when you consider that some of the people included in the Census Bureau’s statistics are children, and therefore not part of the labor force.
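For anyone who wants to check the arithmetic behind “the vast majority,” here is a rough sketch. It uses only the figures quoted above, and it assumes (my assumption, not something either agency states) that both percentages are shares of the same total population measured over the same period. The function and variable names are mine, purely for illustration.

```python
# Figures quoted in the paragraph above.
POOR_PCT = 15.1           # percent of the total population living in poverty
WORKING_POOR_PCT = 11.7   # percent of the total population employed but still poor
POOR_COUNT = 46_200_000   # number of people living in poverty


def working_share_of_poor(working_poor_pct: float, poor_pct: float) -> float:
    """Fraction of poor people who have jobs.

    Assumes both percentages use the same denominator (the total population).
    """
    return working_poor_pct / poor_pct


share = working_share_of_poor(WORKING_POOR_PCT, POOR_PCT)
working_poor_count = POOR_COUNT * share

print(f"Share of poor people with jobs: {share:.1%}")
print(f"Roughly {working_poor_count / 1e6:.1f} million working poor")
```

Under that assumption, a bit more than three out of four people living in poverty have jobs, and that is before you set aside the children counted in the poverty figure.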

Framing the issue of poverty as “lazy people who won’t get a job” helps deflect attention away from the real causes of poverty, and also serves as a technique to manipulate people into supporting positions and policies that act against their own interests.

But framing only works if you do it at the start. Revealing how someone has misleadingly framed a discussion after it has begun is not effective at changing people’s minds, because of a cognitive bias called the entrenchment effect.

A recurring image in US politics is the notion of the “welfare queen”–a hypothetical person, invariably black, who becomes wealthy by living on government subsidies. The popular notion has this black woman driving around the low-rent neighborhood in a Cadillac, which she bought by having dozens and dozens of babies so that she could receive welfare checks for each one.

The notion largely traces back to Ronald Reagan, who during his campaign in 1976 talked over and over (and over and over and over and over) about a woman in Chicago who used various aliases to get rich by scamming huge amounts of welfare payments from the government.

The problem is, this person didn’t exist. She was entirely, 100% fictional. The notion of a “welfare queen” doesn’t even make sense; having a lot of children but subsisting only on welfare doesn’t increase your standard of living, it lowers it. The extra benefits given to families with children do not entirely offset the costs of raising children.

Leaving aside the overt racism in the notion of the “welfare queen” (most welfare recipients are white, not black), a person who thinks of welfare recipients this way probably won’t change his mind no matter what the facts are. We all like to believe ourselves to be rational; we believe we have adopted our ideas because we’ve considered the available information rationally, and that if evidence that contradicts our ideas is presented, we will evaluate it rationally. But nothing could be further from the truth.

In 2006, two researchers at the University of Michigan, Brendan Nyhan and Jason Reifler, did a study in which they showed people phony studies or articles supporting something that the subjects believed. They then told the subjects that the articles were phony, and provided the subjects with evidence that showed that their beliefs were actually false.

The result: The subjects became even more convinced that their beliefs were true. In fact, the stronger the evidence, the more insistently the subjects clung to their false beliefs.

This effect, which is now referred to as the “entrenchment effect” or the “backfire effect,” is very common among people in general. A person who holds a belief and is shown hard physical evidence that the belief is false comes away with an even stronger belief that it is true. The stronger the evidence, the more firmly the person holds on.

The entrenchment effect is a form of “motivated reasoning.” Generally speaking, what happens is that a person who is confronted with a piece of evidence showing that his beliefs are wrong will respond by mentally going through all the reasons he started holding that belief in the first place. The stronger the evidence, the more the person repeats his original line of reasoning. The more the person rehearses the original reasoning that led him to the incorrect belief, the more he believes it to be true.

This is especially true if the belief has some emotional vibrancy. There is a part of the brain called the amygdala which is, among other things, a kind of “emotional memory center.” That’s a bit oversimplified, but essentially true; when you recall a memory that has an emotional charge, the amygdala mediates your recall of the emotion that goes along with the memory; you feel that emotion again. When you rehearse the reasons you first subscribed to your belief, you re-experience those emotions, which reinforces the belief and makes it feel more compelling.

This isn’t just a right/left thing, either.

Say, for example, you’re afraid of nuclear power. A lot of people, particularly self-identified liberals, are. If you are presented with evidence that shows that nuclear power, in terms of human deaths per terawatt-hour of power produced, is by far the safest of all forms of power generation, it is unlikely to change your mind about the dangers of nuclear power one bit.

The most dangerous form of power generation is coal. In addition to killing tens of thousands of people a year, mostly because of air pollution, coal also releases quite a lot of radiation into the environment. This radiation comes from two sources. First, some of the carbon that coal is made of is in the naturally occurring radioactive isotope carbon-14; when the coal is burned, this combines with oxygen to produce radioactive gas that goes out the smokestack. Second, coal beds contain trace amounts of radioactive uranium and thorium, which remain in the ash when it’s burned; coal plants consume so much coal–huge freight trains of it–that the resulting fly ash left over from burning those millions of tons of coal is more radioactive than nuclear waste. So many people die directly or indirectly as a result of coal-fired power generation that if we had a Chernobyl-sized meltdown every four years, it would STILL kill fewer people than coal.

If you’re afraid of nuclear power, that argument didn’t make a dent in your beliefs. You mentally went back over the reasons you’re afraid of nuclear power, and your amygdala reactivated your fear…which in turn prevented you from seriously considering the idea that nuclear might not be as dangerous as you feel it is.

If you’re afraid of socialism, then arguments about health reform won’t affect you. It won’t matter to you that health care reform is actually health insurance reform, or that the supposed “liberal” health care reform law was actually mostly written by Republicans (many of the health insurance reforms in the Federal package are modeled on similar laws written by none other than Mitt Romney; the provisions expanding health coverage for children were written by Republican senator Orrin Hatch (R-Utah); and the expansion of the Medicare drug program was written by Republican Representative Dennis Hastert (R-Illinois)), or that it’s about as Socialist as Goldman Sachs (the law does not nationalize hospitals, make doctors into government employees, or in any other way socialize the health care infrastructure). You will see this information, you will think about the things that originally led you to see the Republican health-insurance reform law as “socialized Obamacare,” and you’ll remember your emotional reaction while you do it.

Same goes for just about any argument with an emotional component–gun control, abortion, you name it.

This is why folks on both sides of the political divide think of one another as “idiots.” That person who opposes nuclear power? Obviously an idiot; only an idiot could so blindly ignore hard, solid evidence about the safety of nuclear power compared to any other form of power generation. Those people who hate Obamacare? Clearly they’re morons; how else could they so easily hang onto such nonsense as to think it was written by Democrats with the purpose of socializing medicine?

Clever framing allows us to be led to beliefs that we would otherwise not hold; once there, the entrenchment effect keeps us there. In that way, we are all idiots. Yes, even me. And you.

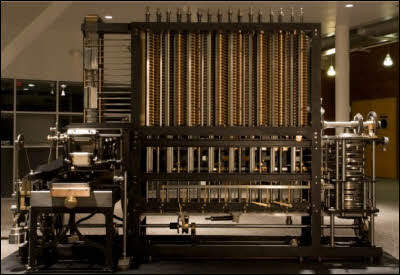

Babbage reasoned–quite accurately–that it was possible to build a machine capable of mathematical computation. He also reasoned that it would be possible to construct such a machine in such a way that it could be fed a program–a sequence of logical steps, each representing some operation to carry out–and that on the conclusion of such a program, the machine would have solved a problem. This last bit differentiated his conception of a computational engine from other devices (such as the Antikythera mechanism) which were built to solve one particular problem and could not be programmed.

Now, when I was in school studying neurobiology, things were very simple. You had two kinds of cells in your brain: neurons, which did all the heavy lifting involved in the process of cognition and behavior, and glial cells, which provided support for the neurons, nourished them, repaired damage, and cleaned up the debris from injury or dead cells.

Right now, most attempts to model the brain look only at the neurons, and disregard the glial cells. Now, there’s value to this. The brain is really (really really really) complex, and just developing tools able to model billions of cells and hundreds or thousands of billions of interconnections is really, really hard. We’re laying the foundation, even with simple models, that lets us construct the computational and informatics tools for handling a problem of mind-boggling scope.

A quick recap for folks who are not neurology geeks: The corpus callosum is a thick bundle of nerves that connects the left and right hemispheres of the brain. If this is damaged or cut, as used to be done to treat a certain kind of epilepsy, the hemispheres can’t communicate directly with each other. Each hemisphere controls one-half of the body and sees one-half of the visual field, but language usually exists only in one hemisphere, not both; when the corpus callosum is cut, it’s almost like you have two different brains in one body, but only one of the two can talk.

“You voted for McCain because you’re a religious zealot who wants to see the government overthrown and replaced with a totalitarian militant theocracy.” “Oh, yeah? You voted for Obama because you’re an anti-capitalist tree-hugger who wants to destroy private enterprise!” This is what happens when we think we can tell what people are by looking only at what they did, and it’s an embarrassment.

This is prolactin. It’s a hormone produced by human beings in the breast during breastfeeding (it causes the production of milk) and in the brain during orgasm. As is typical with many hormones, it serves double duty and has a number of different roles; evolutionary biology never starts with a clean slate, so we get hormones in one part of the body repurposed to do something completely different in another part of the body (and we also get fucked-up design nightmares like the knee…but I digress).