As I type this, Elon Musk says the release of true full self-driving cars is perhaps months away. Leaving aside his…notable overoptimism, this would arguably be among the crowning achievements of computer science so far.

Yes, even more than going to the moon. Going to the moon required remarkably little in the way of computer science—orbital mechanics are complicated, sure, but not that complicated, and there are few unexpected pedestrians twixt hither and yon.

We are moving into a world utterly dominated by computers. And yet…there’s far too little attention, I think, paid to the ethical implications of that.

Probably the biggest ethical issue I see in computer science right now concerns training sets for machine learning.

I don’t mean machine learning like self-driving cars, though that’s a monstrous problem of its own—the thing about ML systems is they’re basically black boxes that most definitively do NOT see the world the way we do, so they can become confused by adversarial inputs, like this strange sticker that makes a Tesla see a stop sign as a Speed Limit 45 sign:

And of course ownership of these enormous ML systems opens whole cans, plural, of worms just by itself. If you use terabytes of public domain data to train a proprietary ML system, what responsibility do you have to make it available to the people who produced your training data, and what liability do you have when your system goes wrong?

And of course deepfake software can produce photos and video of you in a place you’ve never been hanging out with people you don’t know saying things you’ve never said, and make it all totally believable. Look for that to cause trouble soon.

But those are just fringe problems, minor ethical quibbles compared to the elephant in the room: bias in data sets.

Legal societies are putting more and more reliance on ML systems. Police use machine learning for facial recognition (and now, gait recognition, recognizing people by the way they walk instead of their facial features—yes that’s a thing).

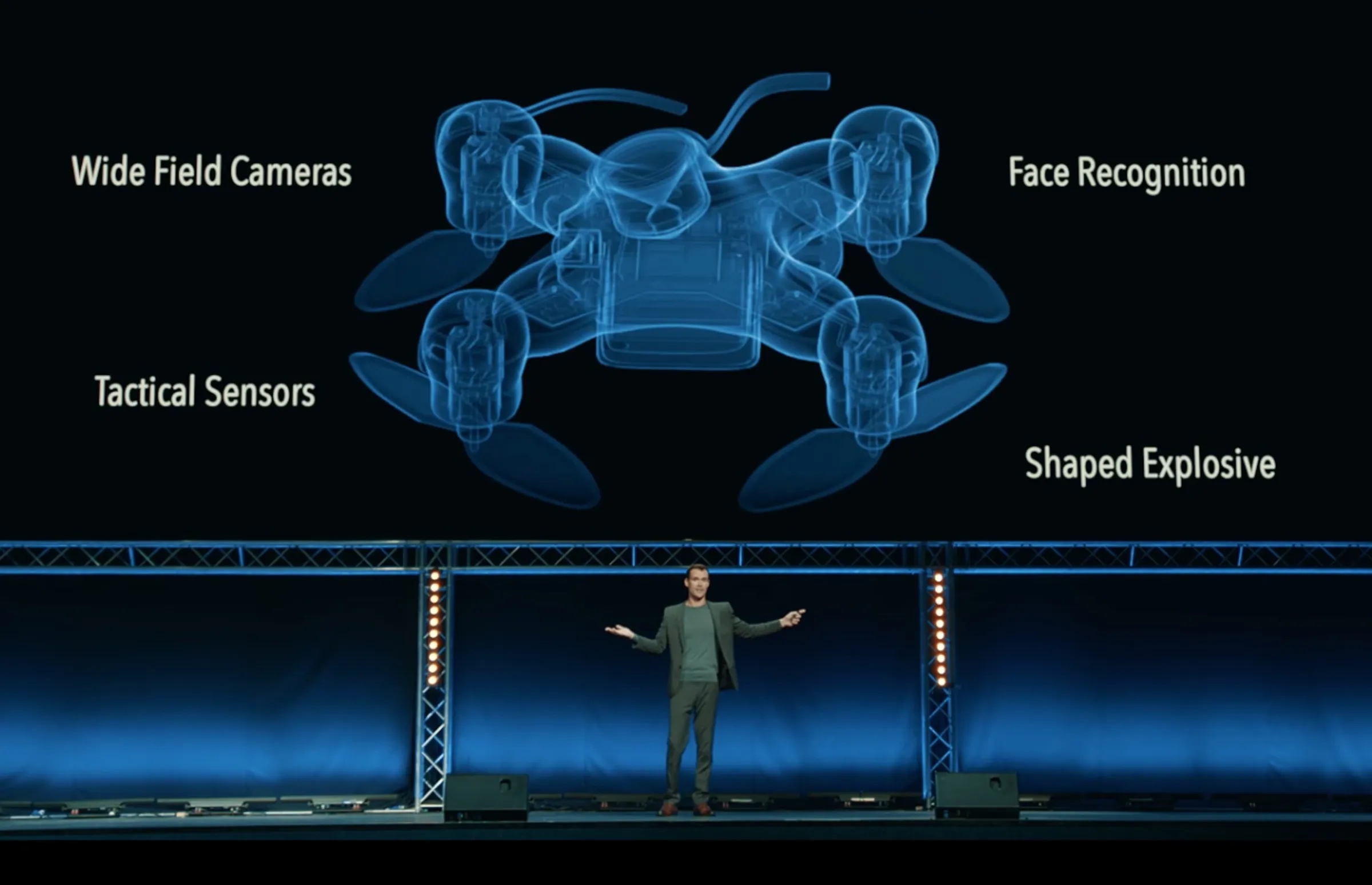

Militaries use facial recognition in weapons platforms. Right now they don’t rely on it, using it only as an adjunct to more traditional intelligence, but the day is coming when foreign military targets will be designated entirely by things like facial recognition systems.

Problem is, those ML systems tend to be racist AF.

I’m not kidding.

ML systems rely on training with positively gargantuan training data sets. You train a machine to recognize faces by feeding it millions—or, if you can, tens or hundreds of millions—of pictures of people’s faces.

The researchers who define and build these systems tend to turn to the Internet for their training data, trawling social media sites with bot software that hoovers up all the photos it can find.

Let’s ignore for a moment the copyright implications. Let’s ignore the ethical issues of treating people’s property as fodder for surveillance systems. Let’s just quietly ignore all those ethical problems so we can talk about racism.

People who post lots of photos on the Internet that get scooped up to train ML systems aren’t an even demographic slice of humanity. They tend to have more money than average, to be Western European or North American, and to be white.

That means ML systems that do things like facial recognition are trained on data sets that are overwhelmingly…white faces.

And that means these systems—even commercial systems now deployed and used by police—suck at identifying black or Asian faces.

Like sometimes really suck.

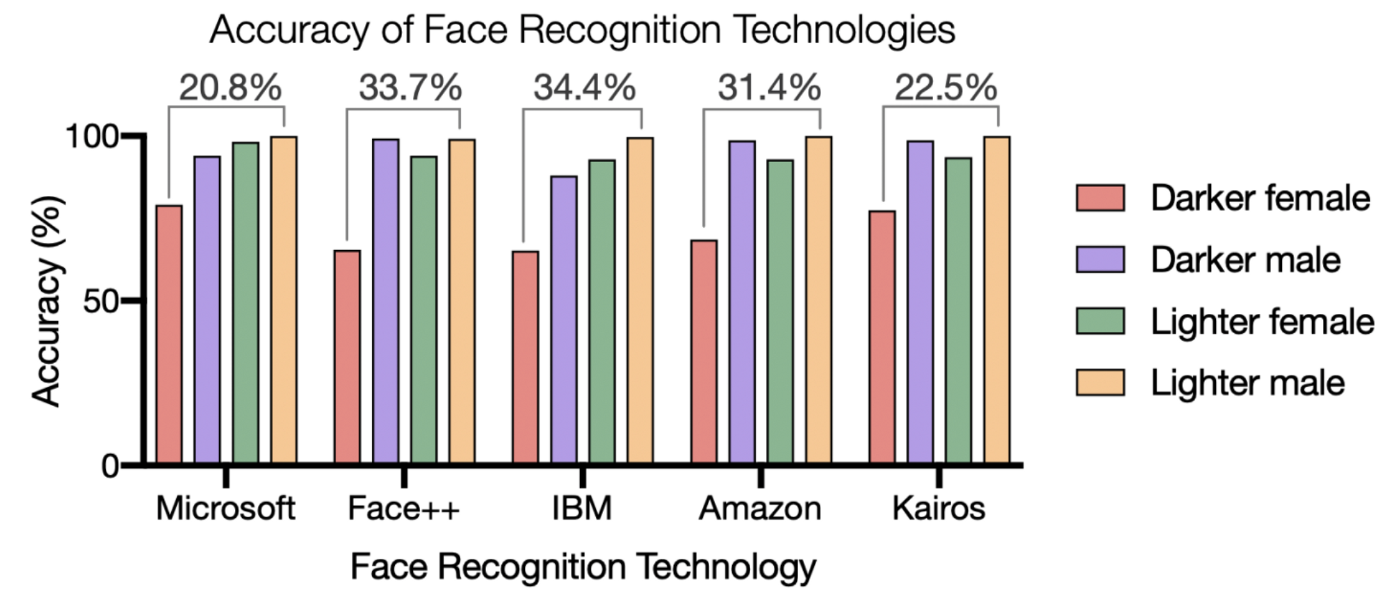

Like, as of 2018, commercial facial recognition systems had a recognition failure rate on white male faces of 0.2%, and a failure rate on black female faces of more than 22%.
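If you want to see how a gap like that can hide inside a respectable-sounding headline number, here's a rough sketch in Python. Everything in it is invented for illustration: the group labels, the 900/100 split, and the `failure_rates_by_group` helper are my assumptions, chosen only so the per-group rates land near the figures above. It is not how any real vendor or study evaluated their systems.

```python
from collections import defaultdict


def failure_rates_by_group(records):
    """Return the failure rate for each group.

    records: iterable of (group, was_correct) pairs, where was_correct
    is True if the system recognized that face correctly.
    """
    totals = defaultdict(int)
    failures = defaultdict(int)
    for group, was_correct in records:
        totals[group] += 1
        if not was_correct:
            failures[group] += 1
    return {group: failures[group] / totals[group] for group in totals}


# Hypothetical test set, skewed the way scraped photo collections tend to be:
# 900 faces from group A, only 100 from group B. These counts are made up.
records = (
    [("A", True)] * 898 + [("A", False)] * 2
    + [("B", True)] * 78 + [("B", False)] * 22
)

rates = failure_rates_by_group(records)
overall = sum(1 for _, ok in records if not ok) / len(records)

for group, rate in sorted(rates.items()):
    print(f"group {group}: {rate:.1%} failure rate")
print(f"overall: {overall:.1%} failure rate")
```

With these made-up numbers the overall failure rate works out to 2.4%, which sounds fine until you split it out and find that one group fails 22% of the time. A single accuracy figure tells you very little if the test set is as lopsided as the training set.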

If that isn’t ringing alarm bells in your head, you’re not fully comprehending the magnitude of the problem.

Here’s a rather frightening quote:

Last year, the American Civil Liberties Union (ACLU) found that Amazon’s Rekognition software wrongly identified 28 members of Congress as people who had previously been arrested. It disproportionately misidentified African-Americans and Latinos.

https://www.theguardian.com/technology/2019/jul/29/what-is-facial-recognition-and-how-sinister-is-it

We train AI and ML systems with data from the real world…and we get deeply racist, profoundly flawed AI and ML systems as a result.

Then we use these AI and ML systems to identify people for arrest or to send autonomous suicide drones.

Any machine learning system is only as good as the training data it’s developed with. And when that training data is a snapshot of the Internet, well…

This blog post was adapted from an answer I wrote on Quora.